Is N-tier architecture still relevant in the public cloud?

Classic N-tier architecture has been with us for well over a decade. But does it still have a security role to play in public cloud deployments? First, a recap. We’ll use a database-driven three-tier web application in our examples, as this covers a large number of real-world scenarios.

What is N-tier and how does it help? (pre-cloud)

Pro tip: skip to the next heading if you don’t need an N-tier refresher.

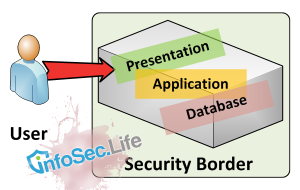

It was once common for all parts of an application to sit on the same server – the web server that serves code to the user’s browser (presentation), the application (business logic), and the database (data) were all together. It was cheap and easy to implement, but could not scale horizontally. Application boundaries could be unclear, and a critical fault in any component would likely cause the entire service to fail.

But most importantly for security, it likely offered multiple attack surfaces on the one host, with no real way to prevent a cascading breach that starts at the user-facing web side and ends at the business-facing data side. User-facing web applications have historically been the easiest to break into, so if an intruder gains access to the web application, they already have access to the same server that the database resides on. A full compromise is likely at this point, and the application security within the business logic has been bypassed – who needs to worry about circumventing application security when you already have the database the application uses?

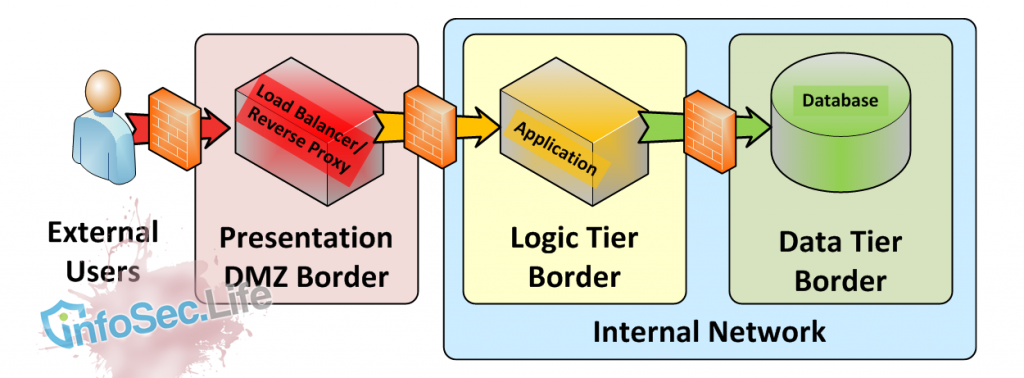

N-tier architecture segregates functions into tiers, with applications answering requests from a less trusted tier. A firewall should be deployed between tiers to keep unwanted ingress traffic from entering each tier. As traffic progresses from left to right in the above diagram, it is considered more trusted.

Enter N-tier architecture. Components of the application are defined and split into firewalled tiers of like services. All the databases sit in the data tier, all the applications and related services sit in the logic (application) tier. Users are compelled by firewall rules to only access the presentation tier. This might be a reverse proxy or web server. This presentation service connects to the application on behalf of the user, crossing into the firewalled logic tier to do so. The application then calls the database, using a different protocol and crossing into the firewalled data tier.
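The flow described above can be sketched as a default-deny allow-list. This is only an illustration: the tier names, the port numbers and the `is_allowed` helper are assumptions made for the example, not part of any particular firewall product.

```python
# Hypothetical tiers and TCP ports, chosen purely for illustration.
# Each key is a (source tier, destination tier) pair; anything not
# listed here is denied.
ALLOWED_FLOWS = {
    ("internet", "presentation"): {443},   # users reach the web tier only
    ("presentation", "logic"): {8080},     # web tier calls the application
    ("logic", "data"): {5432},             # application calls the database
}


def is_allowed(src_tier, dst_tier, port):
    """Return True only if a rule explicitly permits this flow."""
    return port in ALLOWED_FLOWS.get((src_tier, dst_tier), set())


print(is_allowed("internet", "presentation", 443))  # True
print(is_allowed("internet", "data", 5432))         # False
print(is_allowed("presentation", "data", 5432))     # False
```

Note that the presentation tier cannot reach the data tier directly, even on the database port. A request has to pass through the logic tier, which is exactly the compartmentalisation N-tier relies on.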

The real security strength in N-tier is compartmentalising potential security incidents. If there is an exposure in the business logic/application, the intruder still does not have direct access to the database. Assuming a worst-case scenario with no additional controls, the intruder can only replicate how the business logic was accessing the database, and working that out can take some time, buying valuable detection time.

Importantly, the intruder will not necessarily be able to intrude deeper into other systems, because:

- those systems are not on the same server

- firewall policy should prevent the use of additional network tools between tiers

Because each tier is independent of the others, the design can easily scale horizontally where needed and offer redundancy through multiple servers.

The security mantra for this way of working is: The network firewall controls the network security policy.

Is N-tier still relevant in a cloud deployment?

While the cloud may have made IT services more of a commodity than ever before, under the hood N-tier architecture is still relevant. It just looks a little different depending on your vendor’s base architecture. All cloud vendors offer slightly different products, but the fundamentals are the same.

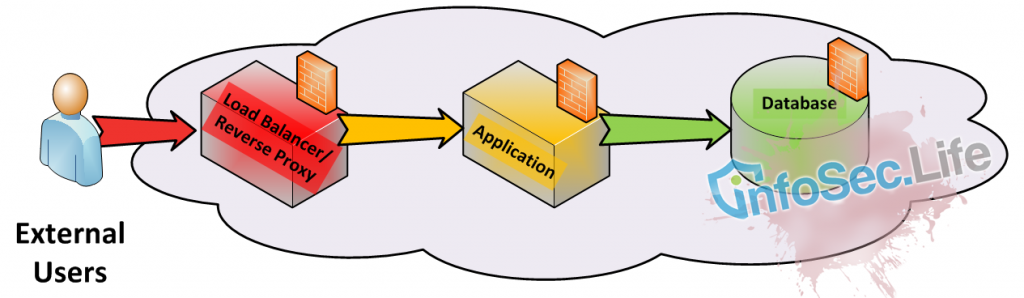

The concept of trusted and semi-trusted tiers, or networks, has kind of disappeared. There’s no DMZ to hand users off into. We no longer throw secure perimeters around entire networks of applications and databases. But presentation, logic and data tiers still exist – just as independent, interconnected nodes. The physical borders of tiers in internal networks have become abstract logical versions in the cloud. Each server can be an independent outpost, responsible for its own security border.

The security mantra for the public cloud way of working is: The host firewall controls network access to itself.

We isolate each node (server) and secure it from the network through a host-based firewall (a basic form of micro-segmentation) or using vendor-provided tools, such as security groups in Amazon Web Services (AWS). The difficulty and risk of managing numerous security policies for numerous hosts is somewhat lessened by applying a single policy to what we previously thought of as each “tier”.
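As a rough sketch of "one policy per tier, enforced on every host", the snippet below renders iptables-style `INPUT` rules for a host from its tier's policy. The host names, addresses, ports and the `host_rules` helper are all hypothetical, and a real rule set would also need to allow loopback, established connections and management access.

```python
# Hypothetical inventory: host name -> (tier, private address).
HOSTS = {
    "web-1": ("presentation", "10.0.0.11"),
    "web-2": ("presentation", "10.0.0.12"),
    "app-1": ("logic", "10.0.0.21"),
    "db-1": ("data", "10.0.0.31"),
}

# One policy per tier: a list of (source tier or "any", TCP port).
TIER_POLICY = {
    "presentation": [("any", 443)],
    "logic": [("presentation", 8080)],
    "data": [("logic", 5432)],
}


def host_rules(host):
    """Render iptables-style INPUT rules for one host from its tier's policy."""
    tier, _address = HOSTS[host]
    rules = []
    for source_tier, port in TIER_POLICY[tier]:
        if source_tier == "any":
            sources = ["0.0.0.0/0"]
        else:
            # Expand the source tier into the addresses of its member hosts.
            sources = sorted(
                addr for t, addr in HOSTS.values() if t == source_tier
            )
        for src in sources:
            rules.append(f"-A INPUT -p tcp -s {src} --dport {port} -j ACCEPT")
    # Default deny: anything not explicitly accepted above is dropped.
    rules.append("-A INPUT -j DROP")
    return rules


for rule in host_rules("app-1"):
    print(rule)
```

Every host in a tier gets the same rules, so adding a second application server means adding one inventory entry rather than writing a new policy. Security groups achieve much the same effect by letting a rule name another group as its source.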

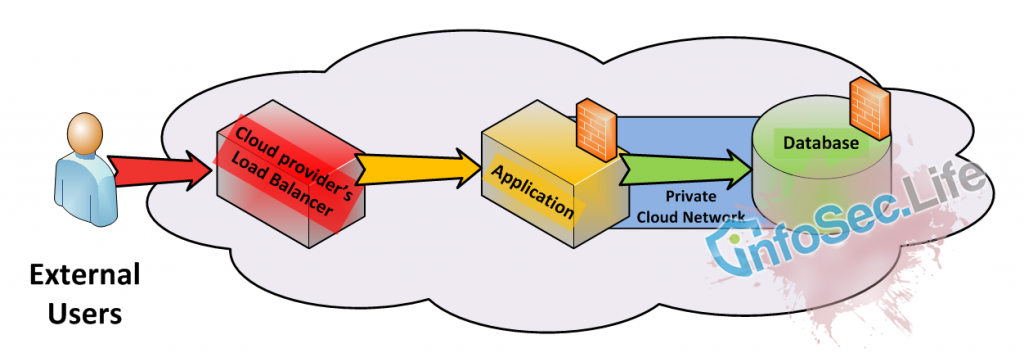

Applications may traverse shared networks when accessing other applications, or may stay within a private network. This can be easily handled:

Option 1: A “private” Public Cloud Subnet

Some Infrastructure-as-a-Service (IaaS) providers will provision services with a private network attached (eg, AWS), or make one available on demand (eg, Rackspace). This is not an encrypted network, but it does allow internal communications between servers, separated from other tenancies by VLAN or similar technology. It’s your own subnet to use as you wish. You can transfer data between servers within the same private cloud network without fear of other cloud tenants sharing the same subnet (cloud vendor security incidents notwithstanding).

However, a VLAN is not the same as a VPN or point-to-point SSH tunnel. VLANs are convenient, but they are not secure network solutions, and this is especially true for a cloud vendor. If your data is more sensitive in nature, you may wish to run your own application-level network encryption over the top of your private network. Always conduct a full risk assessment prior to any cloud deployment decision. Knowing your impacts, likelihoods, corporate policy and risk appetite will help you understand what mitigation you may need.

There’s nothing stopping you deploying three subnets, placing servers within each one, and calling those subnets your Presentation, Logic and Data tiers. That may be helpful in large deployments, but for most it will be an unnecessary complication because the hosts still manage the security policy.
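If you do want one subnet per tier, the address arithmetic is simple. The sketch below uses Python's standard `ipaddress` module to carve a private range into three subnets; the `10.0.0.0/22` range, the `/24` subnet size and the `tier_of` helper are assumptions made for the example.

```python
import ipaddress

# Hypothetical private range allocated to the deployment.
private_net = ipaddress.ip_network("10.0.0.0/22")

# Take the first three /24 subnets, one for each tier.
tiers = ["presentation", "logic", "data"]
subnets = dict(zip(tiers, private_net.subnets(new_prefix=24)))


def tier_of(address):
    """Return the tier whose subnet contains the address, or None."""
    ip = ipaddress.ip_address(address)
    for tier, net in subnets.items():
        if ip in net:
            return tier
    return None


for tier, net in subnets.items():
    print(tier, net)

print(tier_of("10.0.1.25"))  # logic
```

The subnets only label where a host lives. Unless something filters traffic between them, the hosts still have to enforce the policy themselves.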

Option 2: Independent Virtual Hosts, no private subnet

Some vendors provide individual hosts with no default private network (eg, Rackspace by default do not provision a private network, even though the account holder can commission one). For one server’s application to communicate with another server, it must do so via a shared multi-tenant network.

This is not necessarily a bad thing – it helps form the risk profile because it reminds us that we are working from shared infrastructure, in someone else’s data centre(s) – something we may tend to overlook with the convenience of a private network.

To mitigate the risk of data interception in transit, or man-in-the-middle attacks, VPNs, point-to-point encryption and application-layer encryption (eg SSL/TLS) are all valid network strategies. Deploy as appropriate for your needs.
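For the application-layer option, here is a minimal sketch of a TLS client configuration using Python's standard `ssl` module, for example for the logic tier connecting to another host across a shared network. The function names and the optional private CA file are assumptions for illustration, and many database drivers accept their own TLS settings instead.

```python
import socket
import ssl


def make_client_context(ca_file=None):
    """Build a TLS client context that verifies the peer's certificate.

    ca_file is an optional path to a private CA bundle (hypothetical);
    when omitted, the system's default trusted CAs are used.
    """
    ctx = ssl.create_default_context(cafile=ca_file)
    # Refuse legacy protocol versions.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def open_encrypted(host, port, ctx):
    """Open a TCP connection and wrap it in TLS, checking the host name."""
    sock = socket.create_connection((host, port), timeout=10)
    return ctx.wrap_socket(sock, server_hostname=host)


ctx = make_client_context()
print(ctx.verify_mode == ssl.CERT_REQUIRED, ctx.check_hostname)  # True True
```

The default context already requires a valid certificate and a matching host name, which is what guards against man-in-the-middle attacks. Encryption without verification would only protect against passive interception.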

Option 3: Replicate firewalled networks in your cloud

It would be remiss of me not to mention a fuller, more costly option: to deploy network firewalled perimeters within your cloud, closely replicating N-tier network architecture of old. However, this is moving away from public cloud and is likely only an option for very big deployments.

Conclusion

N-tier architecture still holds scaling, redundancy and security benefits in a cloud deployment. But gone are the familiar secure perimeters of internal corporate networks. Instead, security architecture must now work with independent security nodes, where each node/server is responsible for its own local perimeter. Together, these nodes make up the tiers.

Of course, you could deploy entire subnets and hosts within those, closely mimicking the N-tier architecture of old. But with appropriate inter-host encryption (risk assessment pending), it isn’t necessary in the public cloud.

Thanks for the overview. We’ve been deploying self-contained IaaS instances and I suspect we need N-tier to scale properly. Wish we’d thought about this before deploying.

Trey, if your app happily serves its purpose on a single Infrastructure-as-a-Service instance, then there is no need to reinvent the wheel. If it runs out of CPU and RAM, add more via the cloud console. Sometimes we need to let applications run their natural life rather than re-architect them (assuming it’s not presenting a security risk for you).